Apache Doris is an open-source, real-time database for modern analytics and AI. Run it shared-nothing on bare metal, or disaggregated on cloud object storage.

Ship sub-second, interactive analytics straight to your customers at scale.

One real-time warehouse for analytics across every business domain.

High-throughput log and metric analytics for monitoring and incident response.

Vector, text, and JSON search unified in SQL, built for AI agents and RAG.

Trusted by 10,000+ users

Ave AI is a fast-growing data startup turning messy enterprise signals into AI-driven analytics and intelligent decisioning for modern data teams.

Builds AI-driven data products on Doris with fast SQL access for ad-hoc exploration and reporting.

Baidu is China's largest search engine and a leading AI company, powering products from ERNIE large language models to Apollo autonomous driving.

Powers large-scale log analytics and ad-hoc SQL exploration for engineering and platform observability workflows.

MiniMax is a top Chinese AI unicorn building multimodal foundation models behind hit consumer apps like Talkie and Hailuo, serving tens of millions of users worldwide.

Powers product usage, training pipeline, and inference observability analytics for foundation model workloads.

Zhipu AI is one of China's leading large-model labs and a Tsinghua spinout, building the GLM and ChatGLM foundation models that power chatbots and AI agents at scale.

Uses Apache Doris to analyze model training metrics, usage events, and platform telemetry at scale.

miHoYo, known globally as HoYoverse, is a leading game studio behind blockbuster franchises Genshin Impact, Honkai: Star Rail, and Zenless Zone Zero.

Analyzes player behavior, in-game economy, and live operations data with Doris for fast iteration.

Xiaomi is a global top-three smartphone maker and a leading consumer-electronics and smart-home brand, with growing reach into electric vehicles.

Runs interactive analytics on device, app, and service data so operations teams can monitor business health live.

BYD is the world's largest electric-vehicle maker and a top-tier lithium-battery manufacturer, outselling Tesla globally and shipping millions of EVs and hybrids each year.

Powers EV telemetry, supply chain, and after-sales analytics with fast SQL access for product teams.

Ford Motor Company is one of the world's largest automakers, building iconic vehicles like the F-Series pickup, Mustang, and a growing lineup of electric models.

Builds unified analytics over vehicle, dealer, and service data to support connected mobility programs.

JD.com is China's largest retailer by revenue and a Fortune Global 500 top-50 company, operating one of the world's most extensive self-built logistics networks.

Serves retail analytics for order, inventory, and marketing data with fast SQL access for business users.

Kuaishou is one of the world's largest short-video and live-streaming platforms, with more than 700 million monthly active users across China and its international Kwai app.

Powers short-video content and creator analytics with sub-second response over massive engagement event streams.

Li-Ning is a leading Chinese sportswear powerhouse founded by the Olympic gymnast, fusing performance gear with streetwear culture across thousands of stores nationwide.

Powers store, e-commerce, and supply chain analytics with Doris for daily and real-time decision making.

Luckin Coffee is the world's largest coffee chain by store count, with more than 31,000 outlets serving tens of millions of daily customers.

Uses Doris for store, order, and membership analytics to track campaign performance across thousands of outlets.

Meituan is China's largest local-services platform, connecting hundreds of millions of users with food delivery, travel, and on-demand retail every day.

Runs city-scale business analytics over orders, merchants, riders, and user activity for live decision support.

MINISO is a fast-growing global lifestyle and IP retailer, operating over 8,000 stores across more than 110 countries and regions worldwide.

Powers SKU-level sales, inventory, and supply-chain reporting across global retail operations.

Samsung is the world's largest electronics manufacturer, leading in smartphones, memory chips, displays, and home appliances across more than 70 countries.

Uses Apache Doris for device telemetry and service analytics across consumer electronics product lines.

NetEase is China's second-largest gaming company and a major internet group spanning hit titles, music streaming, education, and intelligent enterprise services.

Uses Apache Doris for game and content analytics, helping teams inspect live metrics without long batch delays.

Tencent Music Entertainment is China's leading online music and audio platform, reaching over 500 million monthly users across QQ Music, Kugou, and Kuwo.

Uses Doris for fast aggregation over user listening, content, and engagement data to power live music analytics.

SF Express is the largest integrated logistics provider in China and Asia, and ranks among the top four globally by revenue.

Tracks shipment, route, and operations data in near real-time to support nationwide express delivery.

ZTO Express is China's largest express delivery company by parcel volume, handling more than 34 billion parcels annually and leading the market for nine consecutive years.

Uses Doris for parcel flow, sorting hub, and last-mile analytics across the express delivery network.

Cainiao is Alibaba's global smart-logistics arm and a top-tier cross-border parcel network, serving e-commerce and supply-chain customers in more than 200 countries.

Analyzes warehouse, route, and cross-border logistics data to optimize fulfillment operations.

Suzuki Motor is a top-10 global automaker and the dominant car brand in India and Southeast Asia, also famous for its motorcycles, ATVs, and outboard engines.

Uses Apache Doris for manufacturing, dealer, and service analytics across global automotive operations.

ANTA Sports is China's largest sportswear group and a global top-three player by revenue, with a brand portfolio spanning ANTA, FILA, Descente, and Arc'teryx.

Runs retail, channel, and consumer analytics to inform product and marketing decisions across sportswear brands.

Xtep is a top-five Chinese sportswear brand and the country's number-one running label, sponsoring marathons and dressing millions of runners worldwide.

Uses Doris for membership, sales, and campaign analytics across retail and digital channels.

Talkie is a leading AI companion and character-chat app with over 11 million monthly active users, ranking among the most-downloaded AI apps in the United States.

Analyzes conversation, engagement, and growth signals for an AI character chat product at scale.

Horizon Robotics is China's leading autonomous-driving chipmaker, commanding over 40% of the domestic ADAS market and powering more than 100 vehicle models on the road.

Uses Doris to process driving data, sensor telemetry, and chip performance metrics for autonomous platforms.

Goldwind is the world's number-one wind turbine manufacturer, shipping nearly 30 GW of capacity in a single year and powering wind farms across six continents.

Powers wind turbine telemetry and operations analytics across renewable energy assets worldwide.

Advance Intelligence Group is Southeast Asia's leading AI-driven fintech, serving 40 million consumers and 235,000 merchants through brands like Atome and ADVANCE.AI.

Runs risk, credit, and transaction analytics for AI-driven fintech products across Southeast Asia.

TrueWatch is a next-generation cloud observability platform unifying metrics, logs, and traces across multi-cloud stacks for DevOps and SRE teams worldwide.

Powers a unified observability platform using Doris for metrics, logs, and traces at infrastructure scale.

The fastest end-to-end engine from ingestion to insight.

Real-time analytics, applied directly to your open lakehouse.

First-class search across structured, text, and vector data.

MySQL, PostgreSQL, Kafka, Pulsar, Iceberg, Delta Lake, Hudi

Superset, Metabase, AI Agents, MCP, Grafana, Litefuse

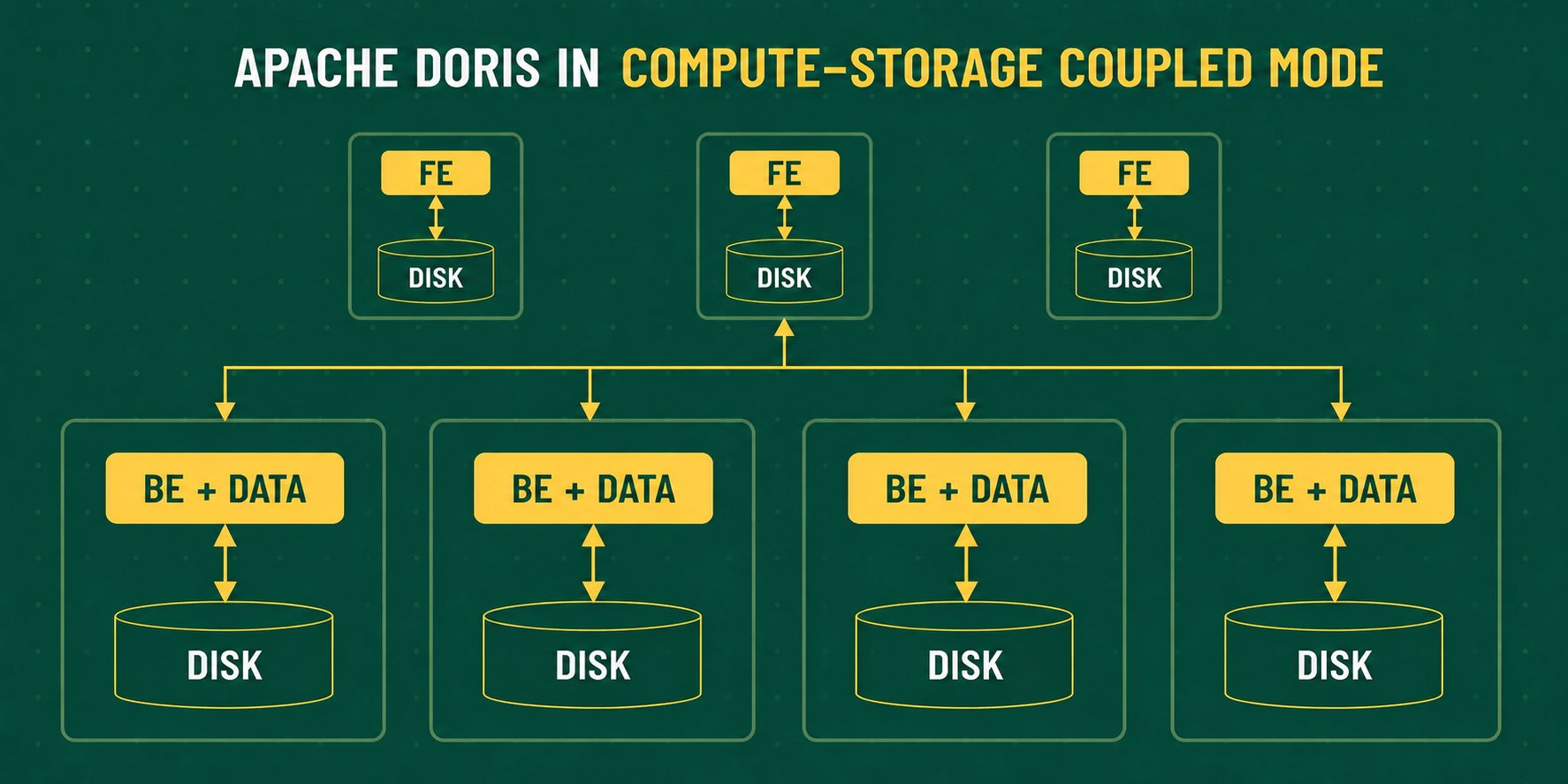

Classic MPP, with compute and storage co-located on each node for maximum local I/O and the lowest query latency.

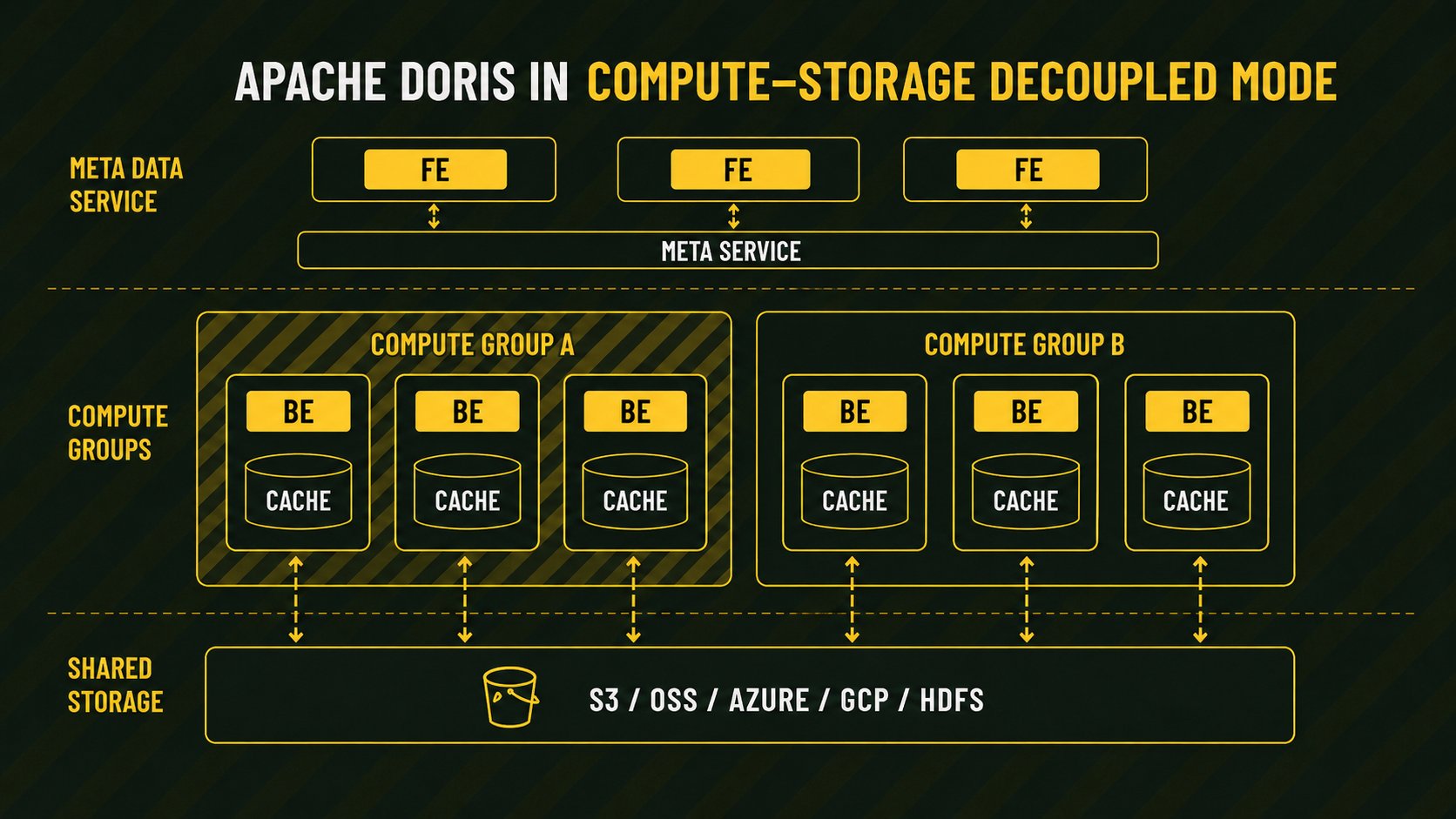

Cloud-native architecture with stateless compute groups over shared object storage. Scale compute on demand and isolate workloads from one another.
